Highlights of SGLang at NVIDIA GTC 2026

SGLang came to NVIDIA GTC 2026 with panels, a happy hour, a 200-person meetup, and a hands-on training lab. Three days, five events, one packed week at the center of the LLM ecosystem and left with a lot to share. If you missed it, here's the full recap.

SGLang at GTC 2026: five events, three days.

At the Main Conference

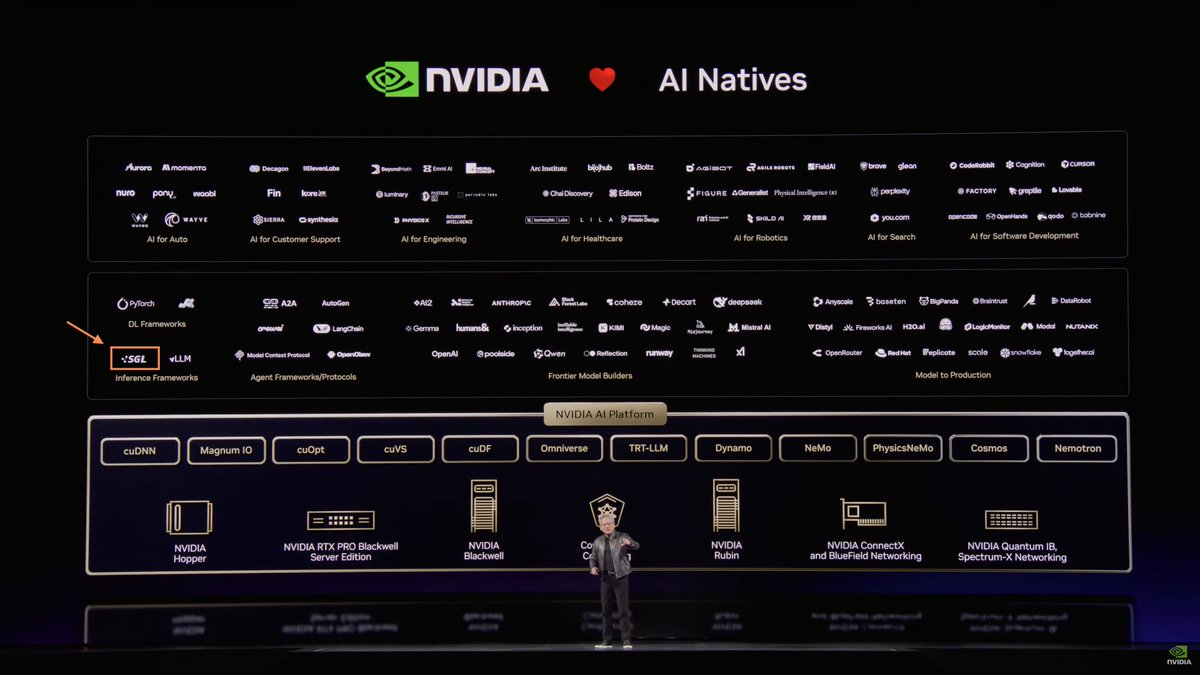

SGLang Featured in the GTC Keynote

SGLang was featured on the NVIDIA AI ecosystem slide during Jensen Huang's GTC keynote. We are honored to be recognized as part of the infrastructure stack behind AI-native applications.

SGLang on NVIDIA's AI ecosystem slide during the GTC 2026 keynote.

Open-Source AI Panel at GTC

On Tuesday, Ying Sheng joined the GTC panel "The State of Open-Source AI" alongside Vartika Singh (Strategic AI Lead, NVIDIA), Jonathan Cohen (VP of Applied Research, NVIDIA), Ion Stoica (Professor, EECS, UC Berkeley), Jeff Boudier (VP of Product, Hugging Face), and Ranjay Krishna (Director of Multimodal and Embodied AI, Ai2).

The panel examined open-source AI's growing role as the primary R&D engine for sophisticated AI systems: what makes open ecosystems trustworthy, scalable, and production-ready, and the community infrastructure enabling reproducible, auditable research.

Ying Sheng (second from left) on the "The State of Open-Source AI" panel at GTC 2026.

🎬 Watch the recording on NVIDIA On-Demand

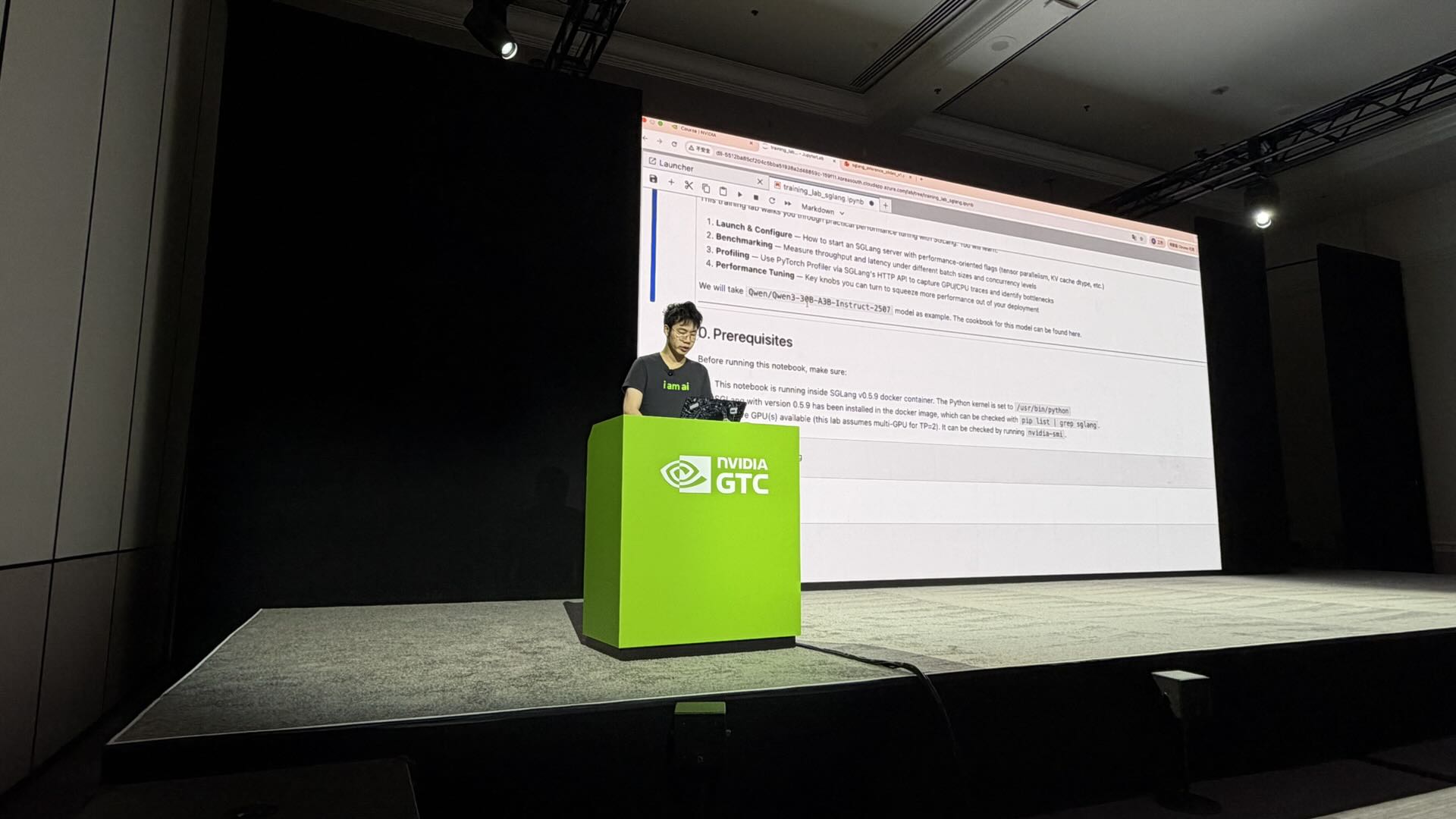

SGLang Training Lab at GTC 2026

On Thursday morning, the RadixArk team led an official GTC training lab: "High-Performance LLM Serving and Training with SGLang".

The lab covered three areas:

- Performance tuning with the SGLangCookbook: practical techniques for improving serving throughput and latency in real deployments

- Profiling and bottleneck analysis: a developer-oriented walkthrough of identifying and resolving performance bottlenecks in LLM serving systems

- SGLang × Miles RL integration: a live demonstration of running SGLang as the inference backend inside a real RL training loop using the Miles framework

The SGLang Training Lab at GTC 2026: hands-on LLM performance tuning and RL training.

🎬 Watch full recording on NVIDIA On-Demand

📁 Download the training lab materials

Side Events

SGLang × RadixArk GTC Happy Hour

On Tuesday evening, SGLang and RadixArk co-hosted a GTC Happy Hour that brought together builders, researchers, and founders from across the inference and training ecosystem, including friends from OpenAI, xAI, DeepMind, Meta, NVIDIA, Ollama, and more.

SGLang × RadixArk Happy Hour.

The evening featured two technical spotlights:

- Banghua Zhu (RadixArk) introduced RadixArk and Miles, SGLang's native RL training framework purpose-built for large-scale MoE post-training workloads.

- Jason Zhao (ScitiX) presented SiMM, an open-source in-memory KV cache engine integrated with SGLang for long-context serving.

Banghua introducing RadixArk and the Miles RL framework.

Thank you to Z Potentials and ScitiX for sponsoring the event and making it possible.

Banghua at Novita's GTC Event

Banghua Zhu joined Novita's GTC event with over 700 attendees. The discussion covered Jensen Huang's remarks on the inflection point between inference cost and demand, the key drivers behind the agentic AI movement, and what it takes for AI products to deliver real value. Banghua shared his perspective on how SGLang is shaping the future of inference infrastructure, enabling next-generation use cases from OpenClaw to agentic inference, and driving the evolution of open models and open infrastructure.

Banghua presenting at Novita's GTC event.

Partners represented included NVIDIA, RadixArk, OpenRouter, Google DeepMind, Kimi (Moonshot AI), Alibaba Cloud, MiniMax, Z.ai, Hugging Face, and Kilo Code.

LinkedIn × SGLang Meetup: LLMs for Search & Recommendation

On Wednesday evening, we hosted approximately 200 engineers at LinkedIn's Mountain View headquarters alongside teams from LinkedIn, TikTok, Meta, and NVIDIA for a deep dive into production LLM systems for search and recommendation.

SGLang swag at the LinkedIn meetup.

LinkedIn Engineering Talks

LinkedIn opened with three engineering presentations:

- Fedor Borisyuk: Semantic search at scale

- Zhipeng Wang: Modeling optimizations for LLM-driven ranking

- Sundara Raman Ramachandran: LLM inference infrastructure optimizations, including a prefill-only serving path delivering 2–3× throughput gains on H100s, upstreamed back to SGLang

LinkedIn engineers presenting on semantic search, ranking, and inference infrastructure.

Relevant work from LinkedIn's engineering team: [1] [2] [3] [4] [5] [6] [7]

SGLang: Roadmap and Miles Framework

SGLang core developer Liangsheng Yin walked through SGLang's H1 2026 roadmap.

Mao Cheng then presented the Miles RL framework, addressing training–inference mismatch in production through three core techniques:

- Importance sampling corrections: compensating for distribution shift between training and inference

- Inference-training alignment: ensuring consistency between rollout behavior and gradient updates

- Rollout Routing Replay (R3): replay-based routing for efficient use of generated rollout data

Mao Cheng presenting the Miles RL framework and its approach to training–inference alignment.

Industry Speakers

- Hongyu Lu (TikTok): LLM search at scale

- Luke Simon and Xi Liu (Meta): Generative Reasoning Reranker [paper link]

- Anish Maddipoti (NVIDIA): Dynamo + NeMoRL

Panel Discussion

The closing panel, hosted by Qing Lan, featured Wenfeng Zhuo, Fedor Borisyuk, Luke Simon, and Mao Cheng. Topics included:

- Semantic ID vs. embedding retrieval

- Whether unified retrieval + ranking (OneRec-style systems) is production-ready

- Inference and training challenges in LLM recsys

- Recent breakthroughs accelerating LLM adoption for recommendations

- The role of continuous learning in production recommendation systems

The closing panel: Wenfeng Zhuo, Fedor Borisyuk, Luke Simon, and Mao Cheng, moderated by Qing Lan.

This is exactly the kind of collaboration that will define the next generation of recommendation systems: production teams and open-source infrastructure co-evolving together.

Looking Ahead

GTC 2026 made clear how much the production ecosystem is converging around open-source infrastructure. From semantic search at LinkedIn scale to RL post-training for frontier MoE models, SGLang is increasingly the shared layer underneath.

We'll keep building in the open. Follow our Luma calendar for future meetups, office hours, and community events.